Overview

Project: Pigeon was a hackathon project I worked on with four friends from school on November 16-17, 2019.

The theme was climate change, so after looking through several sources, including a few Quora posts, we settled on acid rain as the focus of our project.

What is Project: Pigeon?

Project: Pigeon is a drone fitted with a probe that lets the user collect atmospheric data.

…but how?

The probe collects air samples from the atmosphere and sends the data back to a connected base station. The base can then run algorithms to determine where storms are more likely to form and where the atmosphere is more acidic. The goal is to give authorities and the general public more time to respond and take action.
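As a rough illustration of the "where is the atmosphere more acidic" step, here is a minimal TypeScript sketch that ranks probe readings from most to least acidic and flags any reading below pH 5.6, the value usually quoted for unpolluted rain. The `Reading` type, its field names, the `rankByAcidity` function, and the single fixed threshold are all assumptions for illustration; this is not the algorithm our base actually ran.

```typescript
// Hypothetical shape of one probe sample sent back to the base.
interface Reading {
  lat: number;
  lng: number;
  pH: number;
}

// Assumption: unpolluted rain sits around pH 5.6 (CO2 dissolved in water),
// so this sketch treats anything below that as acidic.
const ACID_THRESHOLD = 5.6;

interface RankedReading extends Reading {
  acidic: boolean;
}

// Sort readings from most acidic (lowest pH) to least acidic and tag each
// one against the threshold. Works on a copy, so the input is not mutated.
function rankByAcidity(readings: Reading[]): RankedReading[] {
  return [...readings]
    .sort((a, b) => a.pH - b.pH)
    .map((r) => ({ ...r, acidic: r.pH < ACID_THRESHOLD }));
}

// Example with made-up sample values.
const ranked = rankByAcidity([
  { lat: 40.0, lng: -75.0, pH: 5.9 },
  { lat: 40.5, lng: -75.5, pH: 4.8 },
  { lat: 41.0, lng: -76.0, pH: 5.4 },
]);
console.log(ranked.map((r) => `${r.pH} ${r.acidic ? "acidic" : "normal"}`));
```

A fixed threshold keeps the sketch simple; a real system would more likely weigh pH together with the forecast data the neural network uses.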

In a nutshell,

pH => time until it rains

time => where it will rain

Neural Network => uses forecasts to predict the chance of acid rain; uses altitude + jet streams to predict the location

Probe => samples the air for pH and possibly other content such as CO2

Development

I didn’t work on the machine learning/neural network code myself; I was mostly in charge of the backend, since that’s my forte. Still, here’s what we integrated to [try to] make the magic happen.

TensorFlow.

- TensorFlow is a machine learning library, most commonly used from Python. We trained our network on over 100,000 records covering different locations over time, along with their precipitation values and the percentage of acid rain. With the limited data sources available to us, we reached an accuracy of about 78%.

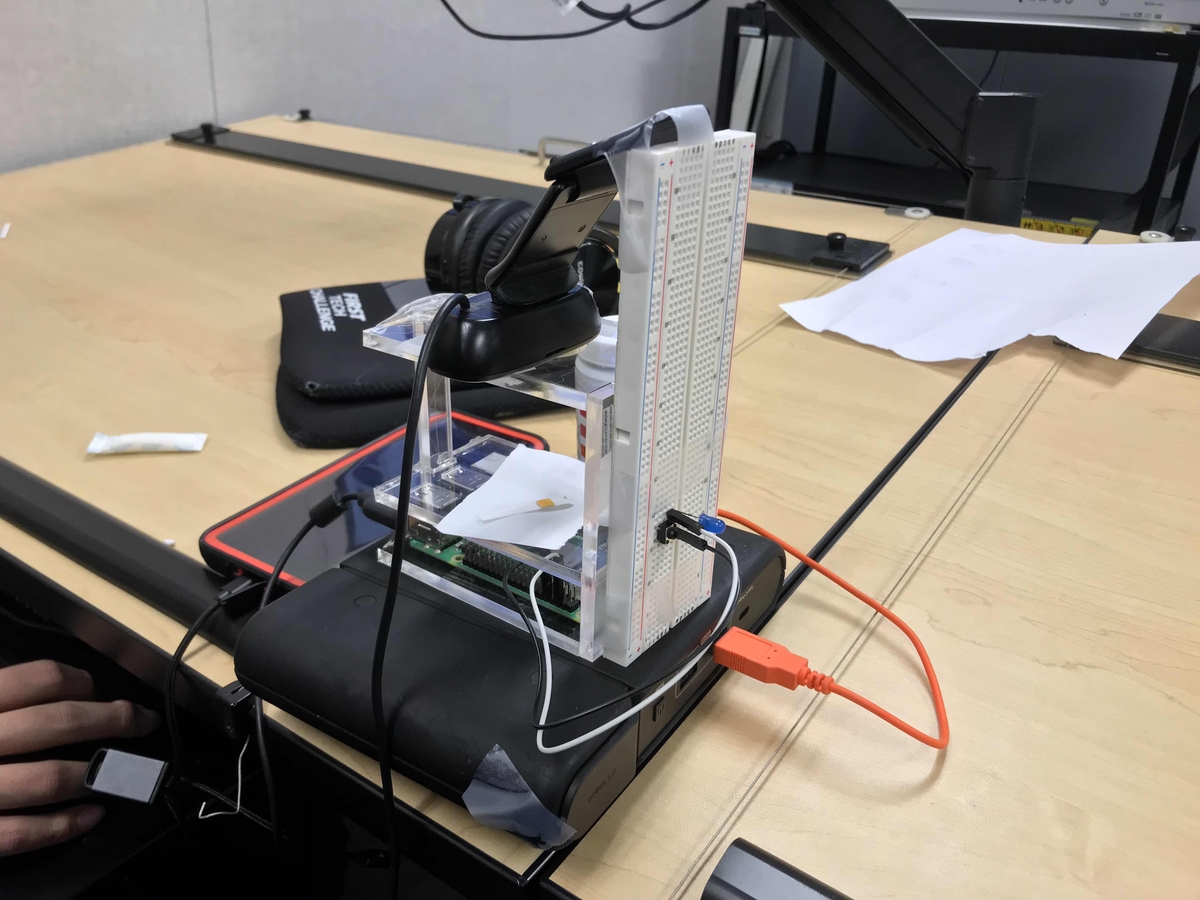

OpenCV.

- The Open Source Computer Vision library, used through its Python package. We used it with the probe (which was a webcam) so the webcam could act as a sensor.

Google Maps API.

- My part of the project: using the Google Maps JavaScript API to display the intensity of acid rain geographically as a heatmap.

Working on the Backend

My job was to get the backend figured out. My initial plan was to integrate the Google Maps API, take the latitude, longitude, and weight value (the acid rain intensity) for each point, and display the results on a geographical heatmap. Unfortunately, I ran into some issues and couldn’t get everything set up in time. :<
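The heatmap step described above boils down to turning each (latitude, longitude, weight) triple into the weighted-point shape that the Maps JavaScript API's heatmap layer consumes (`google.maps.visualization.WeightedLocation`, an object with `location` and `weight`). Below is a minimal sketch under a few assumptions: the ML output has already been loaded into `Row` objects, weights are non-negative numbers, and rows with out-of-range coordinates should be dropped rather than plotted. Plain objects stand in for `google.maps.LatLng` so the code runs outside a browser; `Row`, `WeightedPoint`, and `toHeatmapPoints` are hypothetical names.

```typescript
// Hypothetical row produced by the ML step: a location plus an acid-rain weight.
interface Row {
  lat: number;
  lng: number;
  weight: number;
}

// Mirrors the shape of google.maps.visualization.WeightedLocation. In the
// browser, `location` would be built with new google.maps.LatLng(lat, lng).
interface WeightedPoint {
  location: { lat: number; lng: number };
  weight: number;
}

// Keep only rows with in-range coordinates (NaN fails these checks too)
// and a finite, non-negative weight, then reshape them for the heatmap.
function toHeatmapPoints(rows: Row[]): WeightedPoint[] {
  return rows
    .filter(
      (r) =>
        Math.abs(r.lat) <= 90 &&
        Math.abs(r.lng) <= 180 &&
        Number.isFinite(r.weight) &&
        r.weight >= 0,
    )
    .map((r) => ({ location: { lat: r.lat, lng: r.lng }, weight: r.weight }));
}
```

On the page itself, the resulting array (with each `location` converted to a `LatLng`) would be passed as the `data` option of `new google.maps.visualization.HeatmapLayer({ data, map })`, which requires loading the Maps JavaScript API's `visualization` library.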

I planned to use the @google/maps Node package for the API, along with papaparse to parse the CSV file output by the ML model into JSON, so I could feed the input in as an array.
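With papaparse, that conversion is typically a call like `Papa.parse(csvText, { header: true, dynamicTyping: true, skipEmptyLines: true })`, whose `data` field holds one object per row. To show what that step does without the dependency, here is a minimal hand-rolled stand-in. It assumes a header row containing `lat`, `lng`, and `weight` (hypothetical column names) and plain comma-separated numbers with no quoted fields; proper CSV quoting and escaping is exactly what papaparse handles and this sketch does not. As an aside, `@google/maps` is Google's Node.js client for the server-side Maps web services (geocoding, directions, and so on); the heatmap itself is drawn in the browser by the Maps JavaScript API.

```typescript
// Hypothetical row shape; field names must match the CSV header columns.
interface Row {
  lat: number;
  lng: number;
  weight: number;
}

// Number("") is 0, so blank cells are treated as missing (NaN) instead.
const toNum = (s: string | undefined): number =>
  s === undefined || s.trim() === "" ? NaN : Number(s);

// Minimal stand-in for Papa.parse with header + dynamicTyping.
// Assumes a header line and unquoted, comma-separated numeric fields.
function parseRows(csv: string): Row[] {
  const lines = csv.trim().split(/\r?\n/).filter((line) => line.trim() !== "");
  if (lines.length === 0) return [];

  // Look up column positions by name so column order doesn't matter.
  const header = lines[0].split(",").map((h) => h.trim());
  const iLat = header.indexOf("lat");
  const iLng = header.indexOf("lng");
  const iWeight = header.indexOf("weight");
  if (iLat < 0 || iLng < 0 || iWeight < 0) {
    throw new Error("CSV header must contain lat, lng and weight columns");
  }

  const rows: Row[] = [];
  for (const line of lines.slice(1)) {
    const cells = line.split(",");
    const row = {
      lat: toNum(cells[iLat]),
      lng: toNum(cells[iLng]),
      weight: toNum(cells[iWeight]),
    };
    // Skip rows where any field failed to parse as a number.
    if ([row.lat, row.lng, row.weight].every(Number.isFinite)) rows.push(row);
  }
  return rows;
}
```

Looking columns up by name rather than position means the ML script can reorder its output columns without breaking the parser.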

Originally, I looked for open-source, self-hosted applications that could help display the geographical data, and stumbled upon a program called Orion. After five hours of trying to get its modules working, it became too much trouble to keep setting it up.

That night, I aimed to at least get the backend configured with the Google Maps API. However, I could only get so far with my limited JavaScript experience. I ran into several issues I couldn’t figure out (especially on only two hours of sleep, whoops), but I definitely learned a lot about using Node packages and working in the world of JavaScript.

Future Considerations

I noticed that the first-place team used this interesting Python package called Jupyter, whose notebooks seem INSANELY useful for documenting data and code side by side in projects…

If something takes more than an hour to get working, consider switching to an alternative open-source platform…

At hackathons, you don’t need the technical side working 100%. If you can make it look pretty and appear to do what you intend it to do, you should be fine for the demo. Judges won’t care that your “code just broke five minutes ago”; they only care about what works and what doesn’t at that very moment.

Plan your primary presentation method ahead of time. If you’re planning to show most of your product in a PowerPoint, consider how much a website would actually improve your evaluation… Will the judges even look at the website, and if so, how closely? In these kinds of scenarios, I wouldn’t feel guilty about using a static site generator to save some time. :)